tl;dr;

Ente desktop now has an experimental option in advanced settings to enable on-device ML. When enabled, Ente will run on-device ML to index photos for object and face search. These indexes are currently not synced to the server, and thus will need to be recreated later.

Behind the scenes we've been working on adding on-device machine learning to Ente, and using it for searching and grouping photos by the contents of the photos, including the faces of the people in them.

And we've made progress indeed: we've had a working prototype with this in Ente desktop since last year. Our plan had been to release this on Ente desktop so that power users can start using it, and then subsequently add support for it in the mobile app.

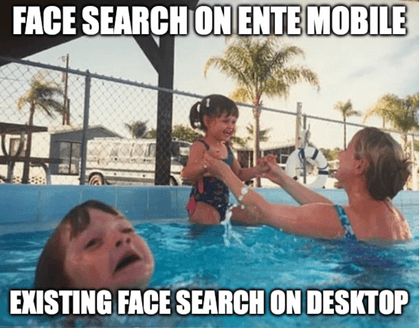

However, after talking to some customers, and introspecting on how we ourselves use Ente, we realized that mobile is where this is perhaps more useful.

So we started work on the mobile version of this (some exciting news on that front very soon!). But in the meantime, the desktop version never got released.

It wasn't that we forgot about it though 😬 There's a technical reason why we didn't release it. To explain this reason, let us first provide a bit of background on what we're trying to do.

Edge AI is the term commonly used to describe running AI (usually ML, machine learning) on the customer's own device instead of doing it on servers. The reason for this is not to save server costs – the reason for this is to preserve the customer's privacy.

With end-to-end encrypted services like Ente, even if we wished to, we cannot perform ML on the servers because the server does not have access to the unencrypted data.

So we could shrug and say, well, we'll just not provide features that use ML. Unfortunately, this approach is not feasible either. Customers are used to the face search and other forms of object recognition in other non-e2ee products, and they carry over that expectation when they come to Ente.

Surprisingly, there are some ways to perform ML on encrypted data! Using the techniques of "Homomorphic Encryption" it is possible to perform ML on the cloud while preserving data privacy. However, while it is surprising that such a thing is even theoretically possible, in practice this is not yet at a stage where it can support performant object recognition and face search.

So that leaves only one option. Perform the ML on the customer's own device.

This option would've been a non-starter even a decade ago, but a lot has changed in recent years and phones are much more powerful now. The manufacturers, including and especially Apple, have realized that on-device ML is the future – server-side ML is too privacy invasive – and have been working in recent years to provide hardware that can support on-device ML.

Newer phones come with special chips for this purpose, and these chips are also integrated into the phone's power management, so it is possible to use state of the art ML models on the phone without draining the user's battery.

But while the hardware has made great progress, the software side hasn't, and the quality of off-the-shelf libraries for on-device ML isn't spectacular. This is mainly because the ML research community still focuses primarily on server-side ML. This is starting to change, but slowly.

However, luckily, while we couldn't have done much about the hardware, we know a thing or two about software 😊 so lack of off-the-shelf solutions isn't preventing us from adding on-device ML to Ente. We're working towards adding the following features:

- The ability to group all photos of a specific person

- The ability to search for objects in photo contents ("ente, show me all photos of sunsets")

We have determined basic feasibility of these features on mobile too, and can foresee a way to do the mobile ML search itself in a fast and efficient manner.

But before doing the search itself, we need to first run on-device ML indexing to precompute the indexes that'll be used during search.
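As a rough illustration of why the indexing step is separated from search, here is a minimal sketch. It assumes each photo is indexed as a fixed-length vector and that search ranks photos by cosine similarity; the post does not say what Ente's indexes actually contain, so treat both as assumptions. The names `build_index`, `search`, and `toy_embed` are hypothetical, and `toy_embed` is a stand-in for a real ML model.

```python
import math
from typing import Callable, Dict, List, Sequence

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def build_index(photos: Dict[str, bytes],
                embed: Callable[[bytes], Vector]) -> Dict[str, Vector]:
    """The expensive step: run the model once per photo and keep the result."""
    return {photo_id: embed(data) for photo_id, data in photos.items()}


def search(index: Dict[str, Vector], query: Vector, top_k: int = 3) -> List[str]:
    """The cheap step: rank already-indexed photos against a query vector."""
    ranked = sorted(index, key=lambda pid: cosine(index[pid], query), reverse=True)
    return ranked[:top_k]


def toy_embed(data: bytes) -> Vector:
    """Hypothetical stand-in for an ML model: counts two byte values."""
    return [float(data.count(b"s")), float(data.count(b"c"))]


photos = {"a.jpg": b"sss", "b.jpg": b"ccc", "c.jpg": b"ssc"}
index = build_index(photos, toy_embed)       # done once, ahead of time
results = search(index, [1.0, 0.0], top_k=2)  # done on every query
```

The point of the split is that `build_index` touches every photo, while `search` only touches the precomputed vectors.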

This too is doable, but it is a fairly intensive operation, and we don't want to be doing it again and again. People use Ente on multiple devices, and some people have very large photo libraries, so repeating this ML indexing on each device seems wasteful.

There's an obvious solution: we could perform the ML indexing once, on one of the devices, and then store the (end-to-end encrypted of course) indexes on the server so that when the customer signs into a new device, the app doesn't have to re-download all the photos and re-index them.
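The flow described above could be sketched roughly as follows. This is an assumption-laden illustration, not Ente's implementation: `FakeServer` is an in-memory stand-in for remote storage, `load_or_build_index` is a hypothetical helper, and the `encrypt`/`decrypt` callables used here are placeholders that merely reverse bytes (they are NOT encryption; a real client would use its end-to-end encryption keys).

```python
import json
from typing import Callable, Dict, List, Optional


class FakeServer:
    """In-memory stand-in for a server that only ever stores opaque bytes."""

    def __init__(self) -> None:
        self.blobs: Dict[str, bytes] = {}

    def get(self, key: str) -> Optional[bytes]:
        return self.blobs.get(key)

    def put(self, key: str, blob: bytes) -> None:
        self.blobs[key] = blob


def load_or_build_index(server: FakeServer,
                        photo_id: str,
                        encrypt: Callable[[bytes], bytes],
                        decrypt: Callable[[bytes], bytes],
                        build: Callable[[str], dict]) -> dict:
    """Reuse the stored index if one exists; otherwise build and upload it."""
    blob = server.get(photo_id)
    if blob is not None:
        return json.loads(decrypt(blob).decode("utf-8"))
    index = build(photo_id)  # the expensive on-device ML step
    server.put(photo_id, encrypt(json.dumps(index).encode("utf-8")))
    return index


build_calls: List[str] = []


def expensive_build(photo_id: str) -> dict:
    """Hypothetical indexing function; records each call so reuse is visible."""
    build_calls.append(photo_id)
    return {"photo": photo_id, "faces": 2}


def placeholder_cipher(data: bytes) -> bytes:
    """Placeholder only: reverses bytes. This is NOT real encryption."""
    return data[::-1]


server = FakeServer()
# First device: no stored index yet, so it indexes and uploads.
first = load_or_build_index(server, "p1", placeholder_cipher,
                            placeholder_cipher, expensive_build)
# Second device: finds the stored blob and skips indexing.
second = load_or_build_index(server, "p1", placeholder_cipher,
                             placeholder_cipher, expensive_build)
```

After both calls, `expensive_build` has run only once, which is the saving the shared index is meant to provide.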

That is where our existing desktop face search version got stalled.

Before we can cache the indexes on the server, we would first need to ensure that both our mobile and desktop on-device ML implementations are able to reuse the same indexes interchangeably. But that is hard to guarantee before we've figured out the implementation specifics for the mobile version 😑

But we felt that it is not a good idea to wait further for the indexing format to be finalized. It is going to take some time before we iron out all the details, so in the meantime we shouldn't prevent customers of the desktop app from using at least their locally generated indexes if they wish.

With this in mind, we're releasing the beta version of object recognition and face search in the Ente desktop app. The thought here is that this way, we can make parallel progress on all three fronts:

- Finishing the local ML on desktop,

- Finishing the local ML on mobile,

- Creating a reusable indexing format that desktop and mobile can share.

With the background laid out, let us also give a few more specifics of what the beta contains:

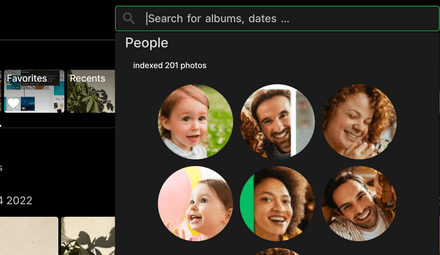

There is now an option under advanced settings in the desktop app to enable this on-device ML (beta).

If you enable it, Ente will start local analysis of your uploaded photos to create various (discardable) indexes, and will start showing some extra search options at various places in the app.

Note that it will also download all previously uploaded photos that are not present locally, to index them. So please only enable this if you are ok with the bandwidth usage and local processing of all images in your photo library.

The current implementation does not modify or write any server-side data, and will store all analysis data on your local device. As we make progress on the mobile implementation, we'll start saving these (encrypted) indexes on the server so that they can be reused. In the interim though, re-indexing might need to happen if the indexing format changes.
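One common way to handle a changing index format is to tag each stored index with a format version and re-index whenever it doesn't match. The sketch below illustrates that idea only; `StoredIndex`, `needs_reindex`, and the version number are hypothetical and not taken from Ente's code.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical version number of the index format this build understands.
CURRENT_FORMAT_VERSION = 2


@dataclass
class StoredIndex:
    format_version: int  # version of the format the index was written in
    payload: dict        # the index data itself (contents are an assumption)


def needs_reindex(stored: Optional[StoredIndex],
                  current_version: int = CURRENT_FORMAT_VERSION) -> bool:
    """Re-index when nothing is stored or the stored format differs."""
    return stored is None or stored.format_version != current_version


old_index = StoredIndex(format_version=1, payload={})
fresh_index = StoredIndex(format_version=2, payload={})
```

With this check, `old_index` would be discarded and rebuilt, while `fresh_index` would be used as-is.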

The UI integration is not fully polished yet. We intend this to be an experimental feature and don't recommend enabling this for most customers. But the small number that do enable it will find it usable, and will also find some interesting output of the ML indexing on the info screen of a photo. As the beta moves towards a final release, we'll add finishing touches to the UI.

Note that this will use quite a bit of CPU - there are some optimizations that we know of but still have to implement - so caveat emptor. You can always turn it off in advanced settings if you feel it is interfering with your day-to-day use of Ente.

If you have any questions or suggestions about Ente's ML integration, or are just technically curious about edge AI, come chat with us on our Discord.